When we read a medieval text (or one from many other periods), we read a reconstruction that represents the work of modern editors. Even an authorial autograph has errors, corrections and corrigenda, and in most cases the textual witnesses are several steps removed from the author. While there are many methods for arriving at what one believes is the ‘best’ text, which can be variously defined, for pre-modern works perhaps the two best-known and most prominent (in part because they are seen to define opposite ends of the spectrum) are 1) the stemmatic method (associated with Karl Lachmann) and 2) the ‘best manuscript’ or codex optimus tradition (represented by Joseph Bédier). The second endeavours to locate a good manuscript (or witness) and to emend it minimally, only when necessary and, if possible, on the basis of other manuscripts. This is essentially parallel to copy-text editing, variations of which dominated twentieth-century editorial theory and practice for modern works in the Anglophone world. The first takes all the witnesses of a text (e.g. manuscripts) and, by comparing and evaluating them (most importantly on the presence or absence of significant errors), reconstructs an archetype. Depending on the stance of the editor and the text itself, this archetype may claim to approximate the authorial text or, alternatively, may simply be the best that one can reconstruct from what survives.
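
For readers who think more easily in code, the bookkeeping behind 'the presence or absence of significant errors' can be sketched roughly as follows. This is only a toy illustration and not any editor's actual procedure: the sigla (A to D), the loci, the Latin readings and the list of readings judged to be errors are all invented, and deciding what counts as a significant error remains the editor's judgement, which the code simply takes as given.

```python
from collections import defaultdict

# Hypothetical collation: each witness's reading at three numbered loci.
# The sigla (A, B, C, D) and the readings are invented for illustration.
collation = {
    "A": {1: "regem", 2: "venit", 3: "magna"},
    "B": {1: "legem", 2: "venit", 3: "magna"},
    "C": {1: "legem", 2: "vidit", 3: "magna"},
    "D": {1: "regem", 2: "venit", 3: "magnam"},
}

# Readings the editor has judged to be significant errors, keyed by locus.
# This judgement is an input here; the code does not make it.
judged_errors = {1: "legem", 2: "vidit", 3: "magnam"}


def shared_errors(collation, judged_errors):
    """Map each judged error to the sorted list of witnesses that carry it."""
    carriers = defaultdict(list)
    for siglum, readings in collation.items():
        for locus, error in judged_errors.items():
            if readings.get(locus) == error:
                carriers[(locus, error)].append(siglum)
    return {key: sorted(sigla) for key, sigla in carriers.items()}


groups = shared_errors(collation, judged_errors)
for (locus, error), sigla in sorted(groups.items()):
    print(locus, error, sigla)
```

In this invented collation B and C share the error at locus 1, which would suggest (though not prove) that they descend from a common ancestor that already had it, while the errors found only in C or only in D say nothing about grouping.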

Recently a growing number of scholarly editors have considered techniques from evolutionary biology in relation to textual transmission. Known as cladistics and/or phylogenetics, these methods, as one might guess, have drawn a fair amount of criticism from more traditional quarters.

However, it is not possible to test which of these methods (stemmatics or cladistics) is more accurate for reconstructing the entire textual history of a medieval work because, among other things, we don’t know how much has been lost, how many steps lie between the surviving manuscripts, and so on. (I should note that, as I have been informed, in some cases we can find a discrete part of a tradition that might be testable, say among a group of professional copyists of a particular text. For example, if ten professional copyists all worked ultimately from one copy (A) of X-text, whether they copied directly from (A) or from another copyist, and were known not to have copied from outside witnesses, they might form a testable subset.)

Given the problems in testing historical traditions, recent work has generated artificial traditions, ones in which we know the lines of transmission, in an effort to see where and how various models fail and/or succeed. As far as I know, these studies have tended not to be printed in traditional Anglophone medieval studies venues (think Speculum), but rather in continental European volumes and/or publications devoted to the use of computing in the humanities. For me, this means that they aren’t as prominent on my radar as they could be (in other words, I do flip through the table of contents of Speculum to see if there is anything of interest, but, for better or worse, I don’t regularly check literary computing journals for medieval content as a matter of habit), but now I am reading:

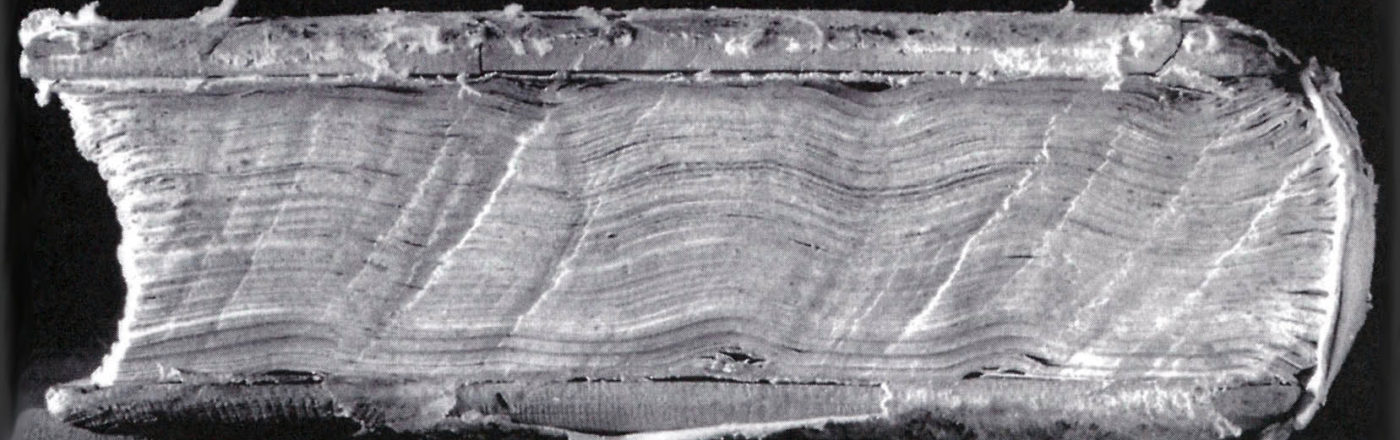
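
To make the idea of an artificial tradition concrete, here is a minimal sketch of one generated in code. It is my own toy and makes no claim to reflect how the published studies built theirs: the function names (`copy_text`, `make_tradition`), the sigla W0 to W6, the sample sentence, the error rate, and above all the error model (a random word gets an asterisk appended) are assumptions made purely for illustration. The point is only that, because every copy records its exemplar, the true lines of transmission are known and a reconstruction could be checked against them.

```python
import random


def copy_text(words, rng, error_rate=0.1):
    """Return a copy of `words`; each word may be replaced by a marked variant."""
    copied = []
    for word in words:
        if rng.random() < error_rate:
            copied.append(word + "*")  # crude stand-in for a scribal error
        else:
            copied.append(word)
    return copied


def make_tradition(archetype, n_copies, seed=0):
    """Generate witnesses W1..Wn, each copied from one randomly chosen earlier witness.

    Returns (texts, parent_of); parent_of records the true lines of transmission.
    """
    rng = random.Random(seed)  # seeded so the same tradition can be regenerated
    texts = {"W0": list(archetype)}
    parent_of = {"W0": None}
    for i in range(1, n_copies + 1):
        exemplar = rng.choice(sorted(texts))  # any existing witness may be copied
        siglum = f"W{i}"
        texts[siglum] = copy_text(texts[exemplar], rng)
        parent_of[siglum] = exemplar
    return texts, parent_of


archetype = "in principio erat verbum et verbum erat apud deum".split()
texts, parent_of = make_tradition(archetype, n_copies=6, seed=42)
for siglum in sorted(texts):
    print(siglum, "<-", parent_of[siglum], ":", " ".join(texts[siglum]))
```

One could then hand only `texts` to a stemmatic or cladistic procedure and compare its proposed tree with `parent_of`. Note that each copy here has exactly one exemplar, so this toy tradition is free of the complication discussed next.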

A large concern for the artificial traditions is the issue of ‘contamination’, where a copyist working from one exemplar incorporates readings from another witness/branch of the tradition. In very concrete terms, this might happen where a scribe begins copying from one manuscript but, finding that the end of the text is damaged or missing, completes his/her copy by using a different manuscript. This has been seen as a problem for both cladistics and stemmatics. Complaints have been voiced about genetic computer models that do not allow textual branches to (re)join the tradition once they have split. A similar situation arises in stemmatics, where ‘contamination’ has long been recognized as a problem for the mechanical evaluation of variants, but can be indicated if/when the editor’s judgement discerns its effects. Editors frequently indicate where ‘contamination’ (or inter-branch influence, or horizontal transmission) is believed to have occurred with a dotted line (from the different tradition branch to the manuscript/group affected by the contamination), as in this example from Sebastiano Timpanaro’s discussion of the issue (The Genesis of Lachmann’s Method, edited and translated by Glenn W. Most (Chicago, 2005), p. 180; I’m very happy to have this translation, which was not available when I was doing coursework!):